By: Affectiva staff

At Affectiva, we’re often asked about the accuracy and robustness of our facial expression classifiers in real-world conditions.

Classifiers are the machine learning algorithms that interpret and report emotion metrics obtained from the face. To our delight, this question gets to one of the core strengths of our Affdex solution.

ACCURACY IN THE WILD

With experience gained from 1000s of media tests collecting millions of face videos worldwide, we’re eager to tout our industrial-strength classifiers, capable of accurately processing facial videos gathered in the most demanding circumstances. Overall, our hardened classifiers boost accuracy and preserve panelist incidence rates in challenging real-world situations.

A DOLL NAMED SUE

Then we began to wonder: with all our focus on hardening our classifiers to account for darkened-room, laptop-on-the-stomach scenarios, might we have lost our ability to accurately assess emotions in ideal environments like focus group settings and central locations? With the launch of Affdex Discovery, the Affdex solution targeted at focus groups, we wanted to make certain we had not over-architected our classifiers. After all, there is no guarantee that hardened solutions work well in less demanding environments.

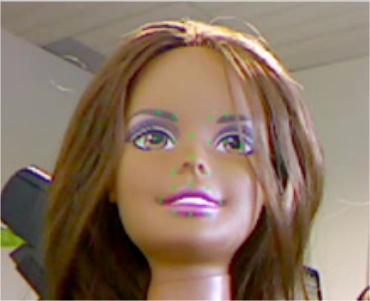

So we set about building a scenario as different as possible from those encountered “in the wild”: the tamest environment we could devise. To start, we bathed our test subject in perfectly diffused fluorescent light. Next, we cordoned off access to our subject to prevent interruptions, such as those that happen when pets traipse between the webcam and subjects in online tests. Then we firmly affixed a webcam to a table, aimed directly at our subject. Finally, we set about identifying a subject who behaved unlike normal respondents. We wanted a subject not prone to squirm, yell at their children, watch TV, smoke, or chew gum.

We settled on a doll.

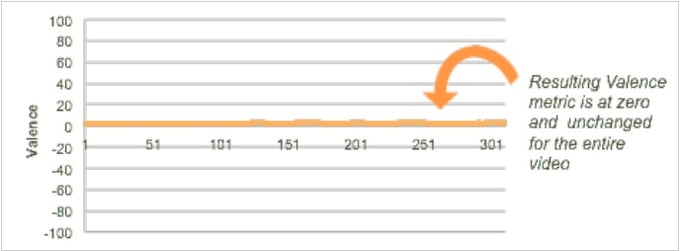

An important characteristic of our choice of Sue was her unchanging neutral expression, since we were very interested in the baseline performance of our classifiers. Sue definitely met this criterion.

Armed with a test scenario and a subject unlike anything we’d ever encounter in the wild, we embarked on a test of Sue’s reaction to media content. Not surprisingly, Sue didn’t react much at all. In fact, she seemed rather bored with it all. And, to our delight, our classifiers reflected this condition perfectly.

This test, in concert with the 1000s of tests conducted online and in central locations worldwide, confirms Affdex classifiers’ ability to accurately track and process facial expressions across a wide range of conditions, from pristine media labs, to focus group settings, to dimly lit basements. By baselining subjects and uncovering movements away from the baseline, Affdex accurately reflects people’s true emotional state.
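
To make the baselining idea concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not the Affdex implementation: the function name `baseline_deviations`, the numeric score scale, and the choice of averaging the first few frames to form the baseline are all hypothetical.

```python
from statistics import mean


def baseline_deviations(scores, baseline_frames):
    """Return each frame's expression score minus the subject's baseline.

    Hypothetical helper: the baseline is assumed to be the mean of the
    first `baseline_frames` scores, before any stimulus is shown.
    """
    if not 0 < baseline_frames <= len(scores):
        raise ValueError("baseline_frames must be between 1 and len(scores)")
    baseline = mean(scores[:baseline_frames])
    return [score - baseline for score in scores]


# A subject with a fixed neutral expression, like Sue: no movement
# away from the baseline in any frame.
sue_scores = [2.0] * 10
sue_deviations = baseline_deviations(sue_scores, baseline_frames=3)

# A made-up responsive subject: neutral at first, then a reaction.
responsive_scores = [2.0, 2.0, 2.0, 2.0, 10.0]
responsive_deviations = baseline_deviations(responsive_scores, baseline_frames=3)
```

Under these assumptions, every value in `sue_deviations` is zero, while the last value in `responsive_deviations` stands out from the rest. Measuring against each subject's own baseline, rather than a fixed threshold, is what lets a naturally neutral or naturally expressive face be read on its own terms.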