By: Rana el Kaliouby, Co-Founder and Chief Strategy and Science Officer; Gabi Zijderveld, VP Marketing and Product Strategy

We just released the latest version of our emotion-sensing software development kit: Affdex SDK version 3.0.

This exciting new product was a cross-team effort that we are really proud of: from data collection and labeling, to training new algorithms and emotion metrics, to architecting our SDKs so that they are easier for app developers to use. We are excited to share it with you all.

Our emotion-sensing and analytics technology is the next frontier of Artificial Intelligence (AI). Our Affdex SDK enables developers to build emotion-aware AI by integrating emotion sensing and analytics into their own digital experiences and apps, so that these respond instantly to users' unfiltered emotional feedback.

The Affdex SDK is built on Affectiva's industry-leading patented science, and it is in our science that we truly push the boundaries of innovation in AI. Using state-of-the-art computer vision and deep learning methods, we develop emotion algorithms ("classifiers") that are trained and tested to achieve high accuracy across a broad range of very nuanced emotional expressions. All of this work draws on our data repository, the world's largest emotion database, with more than 3.8 million faces collected from people in 75 countries, amounting to over 40 billion data points.

SO WHAT’S NEW IN AFFDEX SDK 3.0?

- 1) A new API to allow tracking of multiple faces simultaneously.

- 2) Identification of gender and presence of eyeglasses or sunglasses.

- 3) The world’s first

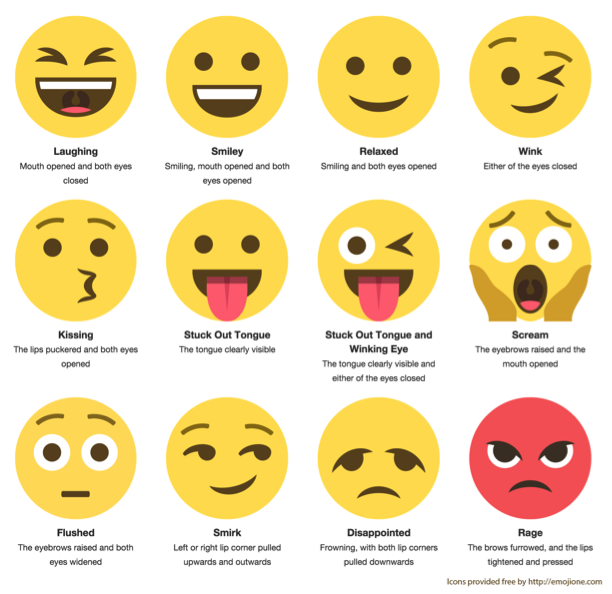

- to allow developers to map facial expressions of emotion to 12 different emojis plus a neutral emoji.

As with the previous versions of our SDKs, you can still detect 7 emotions and 15 highly nuanced facial expressions, as well as two alternative metrics for measuring the emotional experience: valence and engagement. Affdex SDK 3.0 is cross-platform and is available for Android, iOS and Windows platforms (contact us directly for Linux or Mac OS X). All the processing is done on device – no videos or images are sent to our cloud.

Here is a bit more detail on these key new features:

1 – MULTI-FACE TRACKING

With this new capability, developers can track the emotional response of an audience. With the right type of camera, you can now measure and aggregate the mood of a crowd in a variety of venues, such as retail stores, movie theaters or sporting events. This allows you to understand how groups of people respond to digital content, media, messages and experiences. Developers can then use that emotion data to power a unique action or insight in their app or digital experience.
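To make "aggregate the mood of a crowd" concrete, here is a minimal sketch in Python. It assumes some face tracker already returns one valence score per detected face for each video frame; the `FaceReading` type, the -100 to 100 valence scale and the choice of a simple per-frame mean are all illustrative assumptions, not the Affdex SDK's actual API or aggregation method.

```python
from dataclasses import dataclass
from statistics import mean
from typing import List, Optional


@dataclass
class FaceReading:
    """Hypothetical per-face result for one video frame (not the Affdex API)."""
    face_id: int
    valence: float  # assumed scale: -100 (negative) to 100 (positive)


def crowd_valence(frame: List[FaceReading]) -> Optional[float]:
    """Average valence across all faces detected in one frame; None if no faces."""
    if not frame:
        return None
    return mean(r.valence for r in frame)


def crowd_timeline(frames: List[List[FaceReading]]) -> List[Optional[float]]:
    """Aggregate crowd mood frame by frame over a whole video."""
    return [crowd_valence(f) for f in frames]


if __name__ == "__main__":
    frames = [
        [FaceReading(1, 40.0), FaceReading(2, -10.0)],  # two faces in view
        [],                                             # nobody detected
        [FaceReading(1, 60.0)],                         # one face in view
    ]
    print(crowd_timeline(frames))
```

Returning `None` for empty frames, rather than 0, keeps "no audience in view" distinct from "a neutral audience" when the timeline is later plotted or averaged.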

See multi-face in action in this short video here.

2 – FACE TO EMOJI MAPPER

Emojis have become one of the most common forms of human expression in digital communications. Yet, today, you still have to take an explicit action to communicate an emoji by selecting one from a list – not very spontaneous.

In SDK 3.0 we have created a novel way to map facial expressions of emotion to emojis. The Face to Emoji Mapper works by accessing the device camera or webcam. It finds a person's face and reads their facial expressions of emotion, which are then mapped in real time to an emoji, such as a wink or a kiss. When integrated into an app or digital experience, the Face to Emoji Mapper lets users generate emojis with their face. As you can see from the image above, we can map 12 different emojis and a "neutral emoji".
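The final mapping step described above (expression in, emoji out) can be sketched as a lookup plus a threshold. This is a minimal illustration under stated assumptions, not Affectiva's implementation: the expression labels, the emoji names, the 0 to 100 intensity scale and the threshold of 50 are all hypothetical, and the real mapper may use a very different decision rule.

```python
from typing import Dict

# Hypothetical lookup from an expression label to an emoji name; the labels
# and emoji choices are illustrative only, not the SDK's actual mapping.
EXPRESSION_TO_EMOJI: Dict[str, str] = {
    "smile": "smiley",
    "wink": "wink",
    "lip_pucker": "kissing",
    "surprise": "flushed",
    "anger": "rage",
}

NEUTRAL = "neutral_face"


def map_face_to_emoji(scores: Dict[str, float], threshold: float = 50.0) -> str:
    """Return the emoji for the strongest mapped expression, or NEUTRAL.

    scores: assumed per-expression intensities in the range 0-100.
    threshold: assumed minimum intensity before any emoji is emitted.
    """
    # Ignore expressions that have no emoji assigned to them.
    candidates = {k: v for k, v in scores.items() if k in EXPRESSION_TO_EMOJI}
    if not candidates:
        return NEUTRAL
    best = max(candidates, key=candidates.get)
    # Weak expressions fall back to the neutral emoji instead of guessing.
    if candidates[best] < threshold:
        return NEUTRAL
    return EXPRESSION_TO_EMOJI[best]


if __name__ == "__main__":
    print(map_face_to_emoji({"smile": 12.0, "wink": 87.0}))
    print(map_face_to_emoji({"smile": 20.0}))
```

The neutral fallback mirrors the "neutral emoji" in the set above: when no expression is strong enough, the mapper emits neutral rather than forcing a match.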

See a demo video of how this exciting functionality can work here.

This is a fun, experimental feature of our SDK. We are still fine-tuning its accuracy, but we thought the Face to Emoji Mapper was such exciting and unique functionality that we wanted to get it out there to see what developers build with it. That will then allow us to tune it to specific use cases.

The Face to Emoji Mapper can add value to a variety of communication platforms, including messaging, live streaming, ephemeral social media and video communications. Here are some examples:

Text messaging: For example, when you are texting and the person on the other end says something surprising, instead of going through a list to pick the "Flushed Face", our technology can see your facial expression and map it to the right emoji for you on the spot. This makes for a faster and more spontaneous exchange.

Ephemeral Social Media: For example, you receive a 5-second video on a social app such as Snapchat, and your first impressions are captured immediately and translated into an emoji that is sent back to the sender.

Live Streaming: Using a live streaming app, you share a cool life experience with a group of friends, who can in return share their reactions by generating emojis in real time. By letting people share these true, unfiltered emotions on the fly, the Face to Emoji Mapper creates more empathic and engaging experiences.

3 – GENDER AND GLASSES CLASSIFICATION

We have added a gender classifier that indicates whether a face is male or female. Note that our gender classifier does not attempt to classify biological sex or gender identity; rather, it classifies human perception of gender expression. We have also added a classifier that indicates whether a person in a still image or video is wearing eyeglasses or sunglasses.

A number of our customers are very interested in gathering gender data along with the emotion metrics, for example agencies building novel retail experiences that personalize content based on observed gender. Human behavioral researchers are also interested in this data.

WHAT EMOTION-AWARE AI WOULD YOU BUILD?

Emotions influence everything we do, yet in a digital world full of intelligent and hyper-connected devices, emotions are missing. Affectiva is on a mission to realize emotion-aware AI. This will transform not only how humans interact with technology, but especially how humans interact with each other, when these interactions take place online. Bringing emotional intelligence to the digital world is the next frontier of Artificial Intelligence.

Affdex SDK 3.0 enables developers to emotion-enable their digital experiences, bringing us closer to a world of machine emotional intelligence. Here’s to a world where technology not only has IQ but EQ as well. We can’t wait to see what you will build.

*UPDATE:

Affectiva is focused on advancing its technology in key verticals: automotive, market research, social robotics and, through our partner iMotions, human behavioral research. Affectiva is no longer making its SDK and Emotion as a Service offerings directly available to new developers. Academic researchers should contact iMotions, which has our technology integrated into its platform.