Affectiva Announces Breakthrough in Pet Emotion Market

The pioneer in emotion recognition releases long-awaited Feel-ine™ SDK

Boston, Massachusetts: The global leader in emotion recognition announced this week that it is entering the rapidly growing pet emotion market. After years of groundbreaking work in emotion-enabling the interaction between machines and humans, the Emotion AI leader will extend its deep learning algorithms to analyze the enigma that is the feline mind. This unprecedented technology can be integrated with both software and “Internet of Things” hardware devices using Affectiva’s Feel-ine™ SDK, released today.

“Since the inception of Affectiva, our work has been based upon the knowledge that emotions are the constant undercurrent of human nature,” Affectiva CEO and Co-Founder Rana el Kaliouby said. “Enabling the devices around us to recognize our emotions can only enhance our interaction with machines, as well as among the family of humanity. But what about our pets, who are just as much a part of the family--shouldn’t our environments adapt to their emotions as well? We believe the answer is Yes.”

Because studies have suggested that humans and cats share similar brain regions for emotion, the company’s science team felt that the cat was the natural choice for its first venture into detecting emotions in animals. Unlike dogs, who wear their emotions on their figurative sleeves, cats have a more complex, subtle range of emotions, such as Disdain, Indifference, Jealousy, Distrust, Petulance, and occasionally Affection.

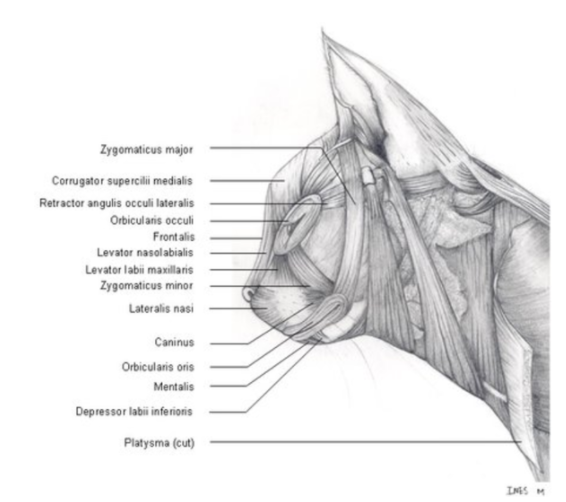

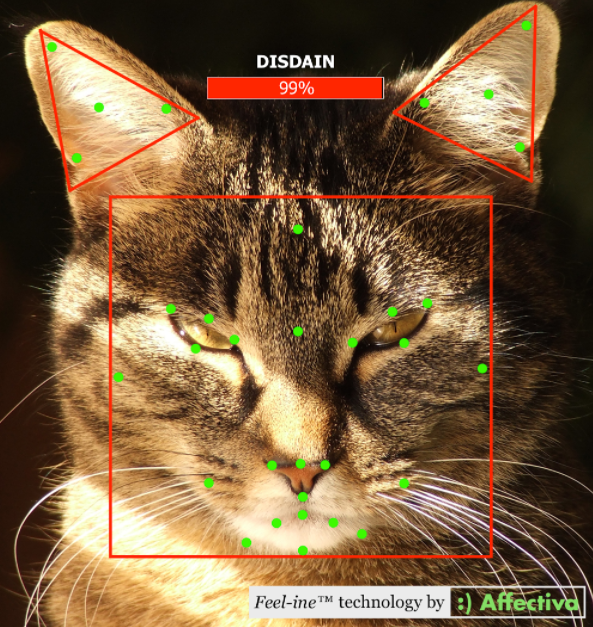

The science behind this technology is very similar to that used to build the largest emotion repository in the world. For humans, the Affectiva science is based upon Paul Ekman’s Facial Action Coding System (FACS). Mapping FACS facial action units to emotions has been the expertise of Affectiva’s deep learning algorithms and manual labelers for years. Understanding emotions for cats was simply a matter of creating a system using the feline equivalents of human facial landmarks. Building upon the work of British researchers who had already developed CatFACS for tracking feline facial expressions, Affectiva has added a previously lacking layer: analysis of the cat’s emotional state.

Facial musculature of the domestic feline

The largest hurdle (other than getting the test subjects to sit still) was mapping facial landmarks onto animal faces amid the fur. Once this was done, the emotion analytics followed naturally, adapted from the existing system.

“The process of establishing ‘ground truth’ by labeling feline emotions was a unique one,” said May Amr, Director of Operations and Senior Data Engineer at Affectiva’s Cairo office. “Since there is only a limited amount of classifier training that can be done from photos and videos, we had to bring a group of test subjects into our office for a few days for 3D facial analysis. Keeping that group under control and focused on the camera was very demanding. It was like herding cats.”

The Feel-ine™ SDK will integrate with apps on any device with a camera to capture and react to true animal feelings. This ease of integration means the family social robot will now adapt play with pets based on the emotions detected. Market researchers can now measure how cats are responding to litter, catnip, food, and squeaky toys, influencing product development for each. Pet adoption centers could easily install a system that will assess a cat’s emotional connection with potential owners for a perfect match. For consumers with smart-home devices, these systems will be able to adapt temperature and lighting settings to the mood of the pets present in the home while their humans are absent. All can be integrated with home monitoring apps, so users will be able to play soothing music or remotely dispense a treat when they sense their pets are emotionally distressed.
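As a rough illustration of the home-monitoring scenario above, the sketch below turns one frame of emotion scores into suggested actions. It does not call the Feel-ine™ SDK. The score format, the `choose_actions` function, the 0.6 threshold, the action names, and the choice of which emotions count as “distress” are all assumptions made for this example.

```python
from typing import Dict, List

# Hypothetical grouping: which of the emotions named in this release
# we treat as "emotionally distressed". This is an assumption.
DISTRESS_LABELS = {"Distrust", "Petulance", "Jealousy"}


def choose_actions(scores: Dict[str, float], threshold: float = 0.6) -> List[str]:
    """Suggest home-monitoring actions for one frame of emotion scores.

    `scores` maps an emotion label to a confidence in [0, 1]. The format,
    the threshold, and the action names are illustrative assumptions.
    """
    if not scores:
        return []
    # Pick the single strongest emotion detected in this frame.
    label, confidence = max(scores.items(), key=lambda item: item[1])
    if confidence < threshold:
        return []  # Nothing is confident enough to act on.
    if label in DISTRESS_LABELS:
        # The two remedies the release mentions for a distressed pet.
        return ["play_soothing_music", "dispense_treat"]
    return []
```

An app might call `choose_actions({"Distrust": 0.9, "Affection": 0.1})` for each analyzed frame and present the returned suggestions to the absent owner. Acting only on the strongest emotion above a threshold is one simple design choice; smoothing scores over several frames would likely reduce false alarms.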

Emotion analysis and facial landmark tracking example for “Mr. Fluffy Boots”

“I’d have to say that assuring the quality of this SDK was one of the greatest challenges I’ve faced in my career,” said Steve Hunnicutt, Senior QA Engineer. “The sheer number of breeds that had to be supported made testing across Android devices seem simple by comparison. Some of the testing was--well--unconventional. Still, I like to think that these claw marks will heal, but the scientific progress we’ve made will last forever.”

By simply using a camera harness with Wi-Fi connectivity, cat owners can use their mobile device to view a live stream of their feline friend’s emotional state.

This is just the beginning of reading pet emotions. A multitude of animal lovers have already approached Affectiva for help understanding the feelings of pet companions other than cats. Now that the more intensive work has been done for cat emotion classifiers, the next logical step would be to create an equivalent SDK for dogs. Early research indicates that this would require a smaller set of classifiers. The preliminary set so far: “Sad You Left”, “Happy You’re Home”, “Pet Me”, “Feed Me”, “Walk Me”, “I Didn’t Do It”, “Gotta Pee” and “Squirrel!”. Some of these can be determined by simply tracking the tail.

To achieve global animal emotion diversity and equality, Affectiva has more ambitious plans on the roadmap: building facial expression systems for other animals that its customer base has frequently requested, such as horses, iguanas, and goldfish.

Read more at affectiva.com.