Guest blog by: Bryn Farnsworth, Science Editor at iMotions

When someone says that your emotions are written all over your face, it isn’t just a convenient phrase - it’s also a fact. Our facial expressions relay our inner world of emotions to the outer world, and we are often much more transparent in showing our emotions than we might realize. By translating these expressions into defined units, we can peek inside someone’s inner world and get a better understanding of how they are feeling.

Facial expression analysis does exactly that - it takes facial expressions as discrete events, defines them, and allows them to be quantified. Taking this approach adds scientific rigor to the understanding of emotions, across time.

In some senses, there is nothing new about this - systems such as the Facial Action Coding System (FACS) have been in place for decades. They define the muscular movements of the face, and which combinations of those movements are associated with which emotion.

Manual Facial Coding

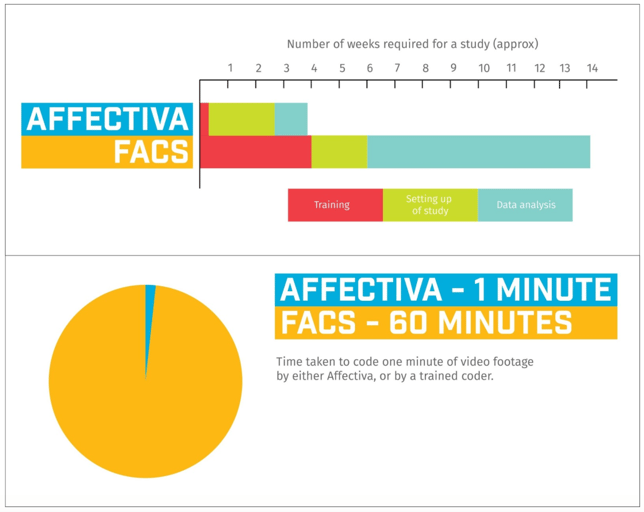

Typically, trained coders would laboriously pore over frame-by-frame video recordings, noting down each and every facial muscle that would flex, stretch, and twitch. As you’d imagine, this takes a lot of time. Having to view minutes, or hours, of human facial expressions on a moment-to-moment basis drags out quick movements into long assessments.

As with any process carried out by humans, inconsistencies will emerge as a result of different views and perceptions. This is usually counterbalanced by having multiple human coders look through the same clips - a process that multiplies the time (and cost) of an already lengthy procedure.
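
To give a feel for how agreement between two human coders might be checked, here is a minimal sketch that computes Cohen's kappa, a common chance-corrected agreement score. It is only an illustration: the function name `cohens_kappa`, the expression labels, and the six example frames are all assumptions made up for this post, not real coding data or part of any particular FACS workflow.

```python
from collections import Counter


def cohens_kappa(coder_a, coder_b):
    """Chance-corrected agreement between two coders labelling the same frames."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("both coders must label the same, non-empty set of frames")
    n = len(coder_a)
    # Observed agreement: share of frames where both coders chose the same label.
    observed = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    # Chance agreement: what we would expect from each coder's label frequencies alone.
    freq_a, freq_b = Counter(coder_a), Counter(coder_b)
    expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both coders used one identical label throughout
    return (observed - expected) / (1 - expected)


# Hypothetical labels for six video frames (illustrative only, not real data).
coder_a = ["smile", "smile", "neutral", "frown", "neutral", "smile"]
coder_b = ["smile", "neutral", "neutral", "frown", "neutral", "smile"]
kappa = cohens_kappa(coder_a, coder_b)
print(f"Cohen's kappa: {kappa:.2f}")
```

A score of 1 means the coders agree perfectly, while a score near 0 means they agree no more often than chance would predict. Every extra coder adds another set of labels to collect and compare, which is where the additional time and cost come from.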

Automated Coding

Fortunately, there are speedier ways through the process. Affectiva provides a platform for automatic facial expression analysis - this can be done reliably in real time, freeing you up from the multiple rounds of extensive coding that would otherwise have to take place. The difference in time required really is startling - that’s why we’ve put together a chart showing the approximate difference in time burden between the two processes.

Affectiva vs. FACS

See for yourself, below, the difference in time between real-time computer-automated coding and human coding.

About Bryn Farnsworth

Hi there! I'm the Science Editor at iMotions. I've previously spent my time as a neuroscientist / psychologist, where I found and developed my love for good science. I have a PhD in neuroscience and developmental biology, alongside a bachelor's degree in psychology, and a master's degree in cognitive and computational neuroscience. I'm a big fan of the brain and mind. I believe in the power of well-captured data to provide answers about who we are, what we think, and why we behave in the way that we do.
