How do you get a computer to recognize human emotions and facial expressions from video alone? What if your car knew when you were drowsy, or a video game adapted to your reactions? At Affectiva, we use machine learning to solve these problems. Our machine learning system has access to over four million videos of people smiling, laughing, frowning, showing surprise and expressing a range of other emotions, from which it learns to recognize human emotions.

Machine learning requires external knowledge. We’ve taken a subset of over 60k of those videos and watched them carefully to annotate the emotions and expressions they contain. We feed these annotations into our machine learning algorithms, and the system builds on that combined knowledge. It’s a monumental task, and the resulting annotated data set is the largest one out there: in the academic research world, the largest data sets contain videos in the low thousands.

We use the Facial Action Coding System (FACS), developed by Paul Ekman and Wallace Friesen, to label facial expressions. FACS describes 24 core facial actions that can occur independently on a human face. It is a coding system for representing what is occurring on the face without assigning any meaning, or emotion, to the facial movement. An example would be a certain degree of brow furrow, or lip corners stretched to a certain degree. According to Jay Turcot, Director of Applied AI at Affectiva, “It lets you actually describe exact facial movements as you see them. So if you gave someone a full series of FACS codes, you could theoretically make a puppet, generating the exact facial expression to match somebody else's.”

Different people can disagree about subtle or confusing expressions, and that’s part of the challenge in creating such a system. So we have at least three different FACS-trained Affectiva employees look at each of the videos we annotate, and we check that the reviewers’ labels are consistent with one another.
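To make the consistency check concrete, here is a minimal sketch of how labels from three reviewers could be compared. It is an illustration only, not Affectiva's actual tooling: the function names, the label strings and the choice of majority vote plus pairwise agreement are all assumptions.

```python
from collections import Counter
from itertools import combinations


def majority_label(labels):
    """Return the label chosen by a strict majority of reviewers, or None.

    `labels` holds one label per reviewer for a single video segment
    (hypothetical format, e.g. "brow_furrow").
    """
    label, votes = Counter(labels).most_common(1)[0]
    return label if votes > len(labels) / 2 else None


def pairwise_agreement(labels):
    """Fraction of reviewer pairs that assigned the same label."""
    pairs = list(combinations(labels, 2))
    return sum(a == b for a, b in pairs) / len(pairs)


# Three hypothetical reviewers labelling the same segment.
reviews = ["brow_furrow", "brow_furrow", "smile"]
consensus = majority_label(reviews)      # strict majority among the three
agreement = pairwise_agreement(reviews)  # one matching pair out of three
```

A segment with no majority label, or with low pairwise agreement, could then be flagged for another look rather than fed into training.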

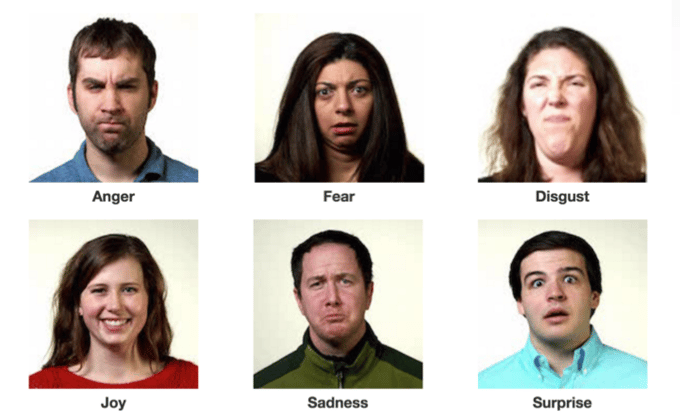

With all of this effort, Affectiva can now detect 21 human facial expressions, which map to six basic emotions: disgust, fear, joy, surprise, anger and sadness. Part of Ekman's research showed that these core expressions are universally recognized and inherent: babies smile when they’re happy, and they raise their eyebrows and open their eyes wide when they are surprised. Affectiva has created a system of detection around these core expressions.

While the six basic emotions offer one way of summarizing what occurs on the face, many other mental states, including confusion and concentration, also manifest there. Facial expressions can also serve as gestures that add emphasis in conversation. Having a system that detects different expressions provides the ability to learn and describe all the complex messages that humans convey. Ultimately, though, proper interpretation of facial expressions will require more information, specifically the context in which the expression occurs. Is the person having a social interaction or a conversation, interfacing with an app, or watching a video?

We believe that it's important to decouple the description of what is occurring (the expression) from the interpretation of what that expression means. FACS offers us a clear and concise description of what occurs and allows us to continue to refine the sensing of individual expressions while working with partners to improve our ability to interpret the facial expression.
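One way to picture this separation is as two independent steps: a description step that only reports which expressions are present, and an interpretation step that combines those readings with context. The sketch below is a hypothetical illustration and not Affectiva's API. The class, the function names, the 0.5 threshold, the context strings and the rule table are all invented for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ExpressionReading:
    """A description of what occurred on the face, with no meaning attached."""
    name: str         # hypothetical expression label, e.g. "brow_furrow"
    intensity: float  # assumed to be scaled to the range 0..1


def describe(frame_scores, threshold=0.5):
    """Description step: keep expressions whose score meets the threshold."""
    return [
        ExpressionReading(name, score)
        for name, score in sorted(frame_scores.items())
        if score >= threshold
    ]


# Interpretation step: illustrative rules only. Real rules would come from
# domain knowledge about each context, not from this table.
RULES = {
    ("brow_furrow", "watching_video"): "confusion",
    ("brow_furrow", "using_app"): "concentration",
}


def interpret(readings, context):
    """Map each reading to a mental state given the context, if a rule exists."""
    return [RULES.get((r.name, context), "unknown") for r in readings]


readings = describe({"brow_furrow": 0.8, "smile": 0.1})
in_video = interpret(readings, "watching_video")
in_app = interpret(readings, "using_app")
```

Because the same reading yields different interpretations in different contexts, the description step can be refined on its own while the rules are developed separately for each application.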

Our next big challenge is to further extend our understanding of the human face beyond the core emotions. For example, vertical applications like automotive and emotionally responsive gaming are ripe areas for understanding how drivers and game players are interacting with the road, digital interfaces, other people and other gamers. We’re looking for industry-based partners to help us further our technology. Please let us know if you’d be interested in partnering with us by emailing partners@affectiva.com.