By: Boisy Pitre, Mobile Visionary

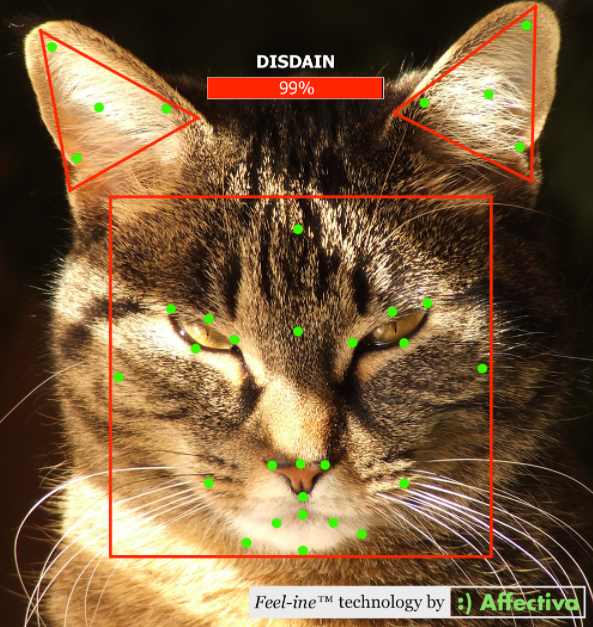

When emotions and computing are mentioned in the same breath, it’s usually assumed that our devices will detect our emotions in order to give us feedback.

For instance, if you’re feeling down, an upbeat song might be suggested. Or, when you are near a previously visited location where you recently had a positive experience, a product or service might be recommended. These are examples of building an emotional profile to enrich your own life experiences, and Affectiva technology is certainly applicable in these use cases.

An interesting but perhaps less explored area is emotion as a control mechanism: your feelings become the cause that triggers an effect in the physical world. That’s what Ben Anding, an Affectiva Face Analyst, and I set out to explore. Putting on our hacker hats, we melded embedded computing with emotion-sensing technology. The results demonstrate how emotions can be used to control external devices.

THE IDEA

The idea was simple: map the various facial expressions to individual lights on an LED tower, with the intensity of the expression reflected in the degree of lighting.

LED towers are used in various manufacturing and safety applications, usually as a stack of differently colored lights. In this case, there are four: red, orange, green, and blue. Each can be driven at up to 24 volts, which gives maximum brightness; any lower voltage dims the light.
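To make the mapping concrete, here is a minimal sketch of how an expression score could be turned into a brightness level. It rests on assumptions the project description does not spell out: that scores arrive as integers from 0 to 100, and that dimming is done with an 8-bit duty cycle (0 to 255), as is common on Arduino boards. The function names `scoreToDuty` and `dutyToMillivolts` are hypothetical.

```cpp
#include <stdint.h>

// Hypothetical helper: convert an expression score (assumed range 0-100)
// into an 8-bit duty cycle, where 0 is off and 255 is full brightness.
// Out-of-range scores are clamped rather than rejected.
uint8_t scoreToDuty(int score) {
    if (score < 0) score = 0;
    if (score > 100) score = 100;
    // Adding 50 before dividing rounds to the nearest integer.
    return static_cast<uint8_t>((score * 255 + 50) / 100);
}

// Hypothetical helper: approximate average voltage, in millivolts, that a
// given duty cycle represents on the 24 V supply mentioned above.
uint16_t dutyToMillivolts(uint8_t duty) {
    return static_cast<uint16_t>((static_cast<uint32_t>(duty) * 24000UL) / 255UL);
}
```

Under these assumptions, a half-strength expression (score 50) becomes a duty cycle of 128, or roughly 12 volts on average, and a full-strength expression (score 100) drives the light at the full 24 volts.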

HOW WE DID IT

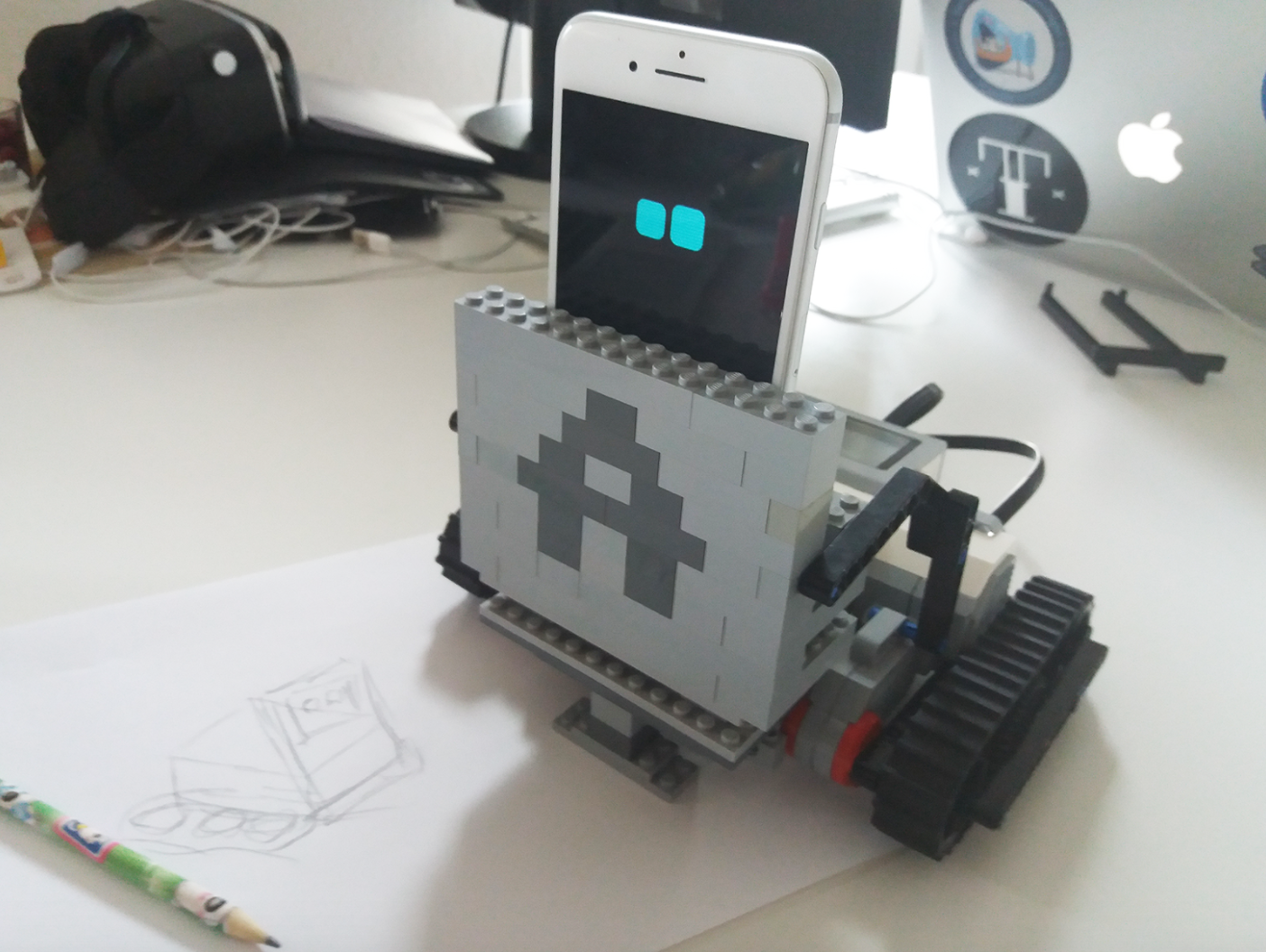

One of the mainstay platforms for electronics prototyping these days is the Arduino. Based on the Atmel AVR family of embedded microcontrollers, an Arduino provides a solid hardware and software platform to develop upon. Arduino boards mate with a variety of external interface modules, known as shields, which add all sorts of functionality, from networking to storage and everything in between.

For this project, an Arduino Mega2560 board was used, along with an Ethernet shield (to provide networking capability) and an LED shield to control power to the LED tower. The LED shield contains a number of Field-Effect Transistors (FETs), which allow a variable amount of voltage to be applied. Each light on the tower was tied to its own FET on the shield, for a total of four FETs.

Our own Affectiva AffdexMe iOS app was extended to broadcast facial expression data over a UDP multicast channel as it occurred. These packets were then received by the Arduino through the Ethernet shield and used to adjust the voltage for each LED based on the intensity of the expression.
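The receiving side can be sketched as a small decoding step. The actual packet format used in the project is not described here, so the layout below is purely hypothetical: a payload of exactly four bytes, one expression score (0 to 100) per light, in the order red, orange, green, blue. The function name `decodeScores` and the constant `kChannelCount` are also illustrative.

```cpp
#include <stddef.h>
#include <stdint.h>

// Four lights on the tower: red, orange, green, blue.
const size_t kChannelCount = 4;

// Hypothetical decoder for an assumed payload of exactly four bytes,
// each holding one expression score in the range 0-100.
// On success, writes one 8-bit duty cycle (0-255) per light and returns true.
// On a malformed payload, returns false and leaves `duty` untouched.
bool decodeScores(const uint8_t* payload, size_t length,
                  uint8_t duty[kChannelCount]) {
    if (payload == nullptr || length != kChannelCount) {
        return false;
    }
    // Validate everything first so a bad packet never half-updates the lights.
    for (size_t i = 0; i < kChannelCount; ++i) {
        if (payload[i] > 100) {
            return false;
        }
    }
    for (size_t i = 0; i < kChannelCount; ++i) {
        // Scale 0-100 to 0-255, rounding to the nearest integer.
        duty[i] = static_cast<uint8_t>((payload[i] * 255 + 50) / 100);
    }
    return true;
}
```

On the Arduino itself, the payload would come from the Ethernet library's UDP reader, and each resulting duty value would typically be handed to `analogWrite` on the pin driving the matching FET. Rejecting malformed packets outright keeps a stray datagram on the multicast group from flickering the tower.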

The result is that the tower LEDs respond nearly instantaneously to changes in the participant’s facial expression. And that’s just the one interfacing method we chose. With the plethora of Arduino shields out there (motor shields, relay shields, and more), our emotions could be used to set “mood lighting” in our homes or do other cool home automation tasks!

THE TAKEAWAY

This “emotion tower” is a very simple but effective example of how facial expressions of emotion can drive actions in technology, controlling devices, appliances, mechanics, apps and even business processes.

As the “Internet of Things” takes shape, our emotions are certain to be part of the data profile. We can then become more aware of our own emotional intelligence quotient (EQ) and let our devices respond to those emotions in a way that can benefit us.

YOU CAN DO THIS, TOO!

At Affectiva, we have made our SDKs for iOS, Android, and Windows available for a 45-day free evaluation so that makers with great ideas can emotion-enable their inventions.

Go ahead – download it from affectiva.com/sdk and give it a spin!