From our cars to our phones, artificial intelligence continues to surround us, getting a foothold in our everyday technology. As we enter the holiday gift shopping season, there’s another application for AI that’s gaining appeal among the upcoming generation: artificial intelligence in toys. In fact, a recent article in Time magazine discusses how artificial intelligence is further invading our homes in our children's playthings.

It’s not a new concept: children mimic our fascination with technology, and playing with our smartphones or iPads is something they have literally grown up with. As toys continue to evolve, the question is where this new wave of toy technology will take us. As an emotion AI company, we can’t help but speculate on how the little ones will be kept entertained in the years to come: what if this year’s hit toy were emotion-enabled? What if that toy could not only recognize a child’s emotional behavior, but also adapt and react to those emotions, and at the same time enhance learning and development?

Here are some ideas we have on how to take some new and classic toys to the next level by infusing them with emotion:

1) Emotion in Toys for Babies and Toddlers. Simple stuffed toys got a tech upgrade years ago with interactive versions, like Teddy Ruxpin, which play recordings when children press sensors on the toys. But what if these toys had a built-in emotion recognition capability, so that they could not only understand and interpret children’s emotions, with either vocal or facial emotion recognition, but also react with appropriate responses? AI is getting close, and with tools like our emotion recognition SDK, a toy could start playing a child’s favorite song (or offer another soothing response) when a mini optical sensor picks up that little lip quivering.

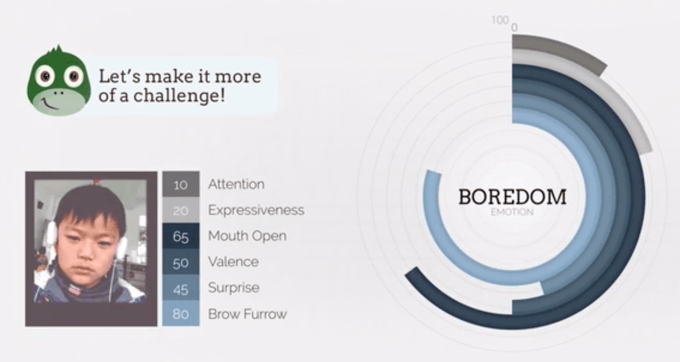

2) Developmental Learning and Education Toys. Companies like Leapfrog, which started in the ’90s as today’s toy tech was beginning to develop, have a spread of products designed to help children reach their developmental milestones. From interactive “laptop”-like toys to apps designed for each age group, this category of toys strives to educate while entertaining. Now imagine if these toys were able to recognize and dynamically react to the emotions of the children playing with them: if a child were getting bored, the toy could start a new game. Or if the child looked confused, an app could pause to explain the activity or run it again.

Learning apps like Little Dragon have already taken this technology and applied it to educating children, with the mission of making learning more enjoyable and more effective via universal and personalized tools. Integrating Affectiva’s emotion recognition SDK, Little Dragon is able to detect facial expressions, body movements and position relative to the screen. The app analyzes this stream of data alongside other signals to infer emotions that are relevant to the process of learning. Little Dragon’s AI then adjusts to and anticipates the user’s state to keep kids engaged.
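To make the "bored means new game, confused means explain" idea concrete, here is a minimal sketch in Python. It is purely illustrative: the `AdaptiveActivity` class, the 0-100 score scale, and both thresholds are hypothetical, and it does not represent the Affectiva SDK or how Little Dragon actually works. It assumes some emotion detector supplies per-frame engagement and confusion scores, and it averages them over a short window so that a single odd frame does not flip the toy’s behavior.

```python
from collections import deque
from statistics import mean

# Hypothetical thresholds on an assumed 0-100 score scale (not from any real SDK).
BORED_BELOW = 30.0
CONFUSED_ABOVE = 60.0


class AdaptiveActivity:
    """Chooses a toy's next action from a short window of emotion scores."""

    def __init__(self, window=5):
        # Keep only the most recent `window` readings of each score.
        self.engagement = deque(maxlen=window)
        self.confusion = deque(maxlen=window)

    def observe(self, engagement, confusion):
        """Record one frame of (hypothetical) scores from an emotion detector."""
        self.engagement.append(engagement)
        self.confusion.append(confusion)

    def next_action(self):
        """Return 'explain', 'new_game', or 'continue' from the averaged scores."""
        if not self.engagement:
            return "continue"  # nothing observed yet
        if mean(self.confusion) > CONFUSED_ABOVE:
            return "explain"   # pause to explain or repeat the activity
        if mean(self.engagement) < BORED_BELOW:
            return "new_game"  # child seems bored: switch games
        return "continue"
```

Checking confusion before boredom is a design choice in this sketch: a confused child may also look disengaged, and explaining the activity seems like the gentler first response.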

3) Emotion in Tech Toys for Younger Kids. Toys with a theme of “taking care of” - such as lifelike baby dolls that let children mimic parenting skills - have also come a long way in terms of features. The same “parenting” concept has been reincarnated with Hatchimals, one of the hottest toys for the 2016 holiday season. Intended for children ages 5 and up, the toy is designed to “live” through several developmental stages that its owner must help it grow through. It comes in egg form and must be nurtured to hatch, then “parented” through hatchling, infant and kid stages - and it is programmed with games, like versions of “Simon Says,” to prolong the fun.

Imagine the world of possibilities if Hatchimals were aware of their owners’ emotions and could adapt accordingly. If a child were sad, their Hatchimal could ask to play a game to cheer them up, ask to be fed to take the child’s mind off their troubles, or simply do something adorable. Or, if the child were happy, the Hatchimal would be too.

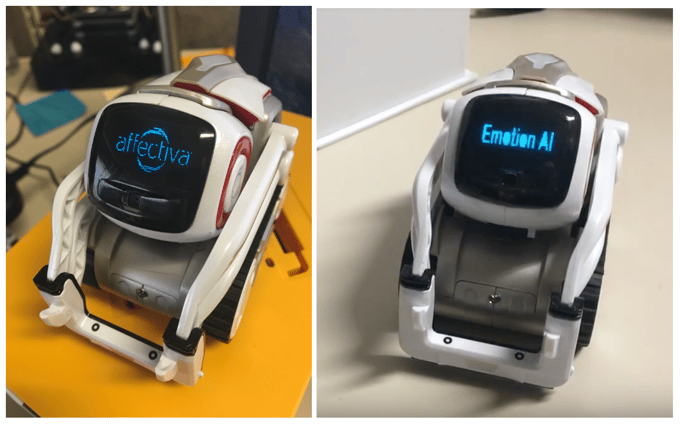

4) Emotion Tech Today: Social Robots. Not all of the use cases above are completely theoretical. Robots like Tega already have the ability to sense and respond to the affective content of facial expressions. Emotion AI applications can also help existing robots like Mabu and Cozmo take their engagement with their humans to the next level with emotion recognition and dynamic responses.

The educational social robot Tega, out of the MIT Media Lab Personal Robots Group, for example, has a built-in component that combines the emotional data it collects with a reward signal, which feeds into an affective reinforcement learning algorithm that guides the robot’s behavior. In tests, the robot played a game and personalized its motivational strategies (based on affective data) to each child. As a result, children learned new words from the repeated tutoring sessions, and students who interacted with a robot that personalized its feedback strategy showed a significant increase in valence - which is the intrinsic attractiveness (positive valence) or aversiveness (negative valence) of an event, object, or situation. This integrated system of tablet-based educational content, affective sensing and policy learning, and an autonomous social robot holds great promise for a more comprehensive approach to personalized tutoring.
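As a rough illustration of what blending emotional data with a reward signal can look like, here is a small Python sketch. It is not Tega’s actual algorithm: it swaps in a simple epsilon-greedy bandit, and the `AffectiveBandit` class, the strategy names, the `affect_weight` parameter, and the assumption that valence arrives as a number in [-1, 1] are all hypothetical.

```python
import random


class AffectiveBandit:
    """Epsilon-greedy bandit over motivational strategies (illustrative only)."""

    def __init__(self, strategies, epsilon=0.1, affect_weight=0.5, seed=None):
        self.strategies = list(strategies)
        self.epsilon = epsilon              # probability of exploring at random
        self.affect_weight = affect_weight  # how much valence counts in the reward
        self.values = {s: 0.0 for s in self.strategies}  # running mean reward
        self.counts = {s: 0 for s in self.strategies}
        self.rng = random.Random(seed)

    def choose(self):
        """Pick a strategy: usually the best so far, occasionally a random one."""
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.strategies)
        return max(self.strategies, key=lambda s: self.values[s])

    def update(self, strategy, task_reward, valence):
        """Blend task reward with valence (assumed in [-1, 1]); update the mean."""
        reward = task_reward + self.affect_weight * valence
        self.counts[strategy] += 1
        n = self.counts[strategy]
        # Incremental running-mean update for this strategy's value estimate.
        self.values[strategy] += (reward - self.values[strategy]) / n
        return reward
```

In use, a tutoring loop would call `choose()` before each round, apply that strategy, then call `update()` with a learning outcome (say, 1.0 for a correctly recalled word) and the child’s measured valence. Over repeated sessions, the strategy that keeps a particular child both learning and positive would gradually come to be chosen most often.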

The Bottom Line

It’s an exciting time to be in the toy industry, with all the innovation and engagement that artificial intelligence and emotionally adaptive capabilities can provide today. From our perspective here at Affectiva, the sky’s the limit for the creative minds behind these toys. The prospect of toys that one day react to a child’s (or a parent’s!) emotions during play opens up an exciting space for building predictive and reactive experiences.