By: Jeffrey Lu, Software Engineer Intern

The new JavaScript SDK from Affectiva makes it easier than ever for developers to bring emotion-sensing technology to the browser!

As an intern at Affectiva this summer, I was initially tasked with updating a previous demo that was written using the SDK. The demo captured your emotional reaction while you played a game for a short time. Its code was unwieldy, with logic to start the webcam, set up a web worker, and send and receive frames for processing. Using the SDK, however, I was pleasantly surprised to find all this logic wrapped up in just a few lines of code:

```javascript
// get the div where the face video will appear
var face_video = document.getElementById("face-video-div");

// initialize the CameraDetector
var detector = new affdex.CameraDetector(face_video);

// choose emotions to detect
detector.detectAllEmotions();

// start the detector
detector.start();

// add callback for when the detector is initialized
detector.addEventListener("onInitializeSuccess", function() { /* ... */ });

// add callback for when a frame finishes processing
detector.addEventListener("onImageResultsSuccess", function(faces, image, timestamp) { /* ... */ });
```

I came to see the “emotions detector” as a black box: pass in a frame for processing and get back emotion data for that frame.

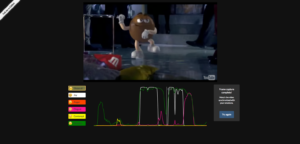
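To make the black-box view concrete, here is a minimal sketch of what a per-frame handler might do with the results. It assumes each entry of `faces` carries an `emotions` object that maps emotion names to numeric scores. That shape and the helper name `strongestEmotion` are my own assumptions for illustration, not a statement of the SDK's API.

```javascript
// Hypothetical helper: pick the highest-scoring emotion for the first face.
// Assumes faces[i].emotions looks like { joy: 87.2, anger: 0.4, ... }.
function strongestEmotion(faces) {
  if (!faces || faces.length === 0) {
    return null; // no face was found in this frame
  }
  var emotions = faces[0].emotions || {};
  var best = null;
  Object.keys(emotions).forEach(function (name) {
    if (best === null || emotions[name] > best.score) {
      best = { name: name, score: emotions[name] };
    }
  });
  return best;
}

// Example with a hand-written frame result (not real SDK output).
var sampleFaces = [{ emotions: { joy: 80, sadness: 5, surprise: 12 } }];
console.log(strongestEmotion(sampleFaces)); // { name: 'joy', score: 80 }
```

Inside the frame-results callback, a call such as `strongestEmotion(faces)` would then yield one label per processed frame, or `null` when no face is visible.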

Armed with the SDK, I started looking for cool ways to integrate it. After hearing about the application of Affectiva’s software to ad testing, I became interested in YouTube. Why not track your emotional reaction to any video you watch, not just ads? YouTube seemed like the closest thing to a universal video repository, so I started looking into their player and data APIs. Soon, I was able to implement a search feature and dynamically control a YouTube player, even in the face of a poor Internet connection and buffering issues. I borrowed the UI from the game demo, rendering the emotions chart using D3.

Screenshot of YouTube demo with all emotions displayed on graph.

Naturally, the next step was to use the SDK with a live video or stream such as Twitch or YouTube Live. For both of these platforms, I found endpoints to read the top current streams, and for each stream I was able to get both the corresponding video and a chat embed. I searched around for examples of dynamic charts and found that CanvasJS worked quite nicely. By discarding a fraction of the data once the emotion lines reached the end of the chart, I achieved a scrolling effect that matched the real-time nature of the media itself.

Screenshot of Twitch demo with all emotions displayed on graph.
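The scrolling effect comes down to trimming the oldest points whenever a series outgrows the chart. Below is a rough sketch of that idea, independent of any charting library. The function name `appendWithScroll` and the parameters `maxPoints` and `dropFraction` are hypothetical, chosen only for this example.

```javascript
// Append a point; once the series is wider than the chart,
// drop the oldest fraction so the line appears to scroll left.
function appendWithScroll(series, point, maxPoints, dropFraction) {
  series.push(point);
  if (series.length > maxPoints) {
    // Always drop at least one point so the series cannot grow unbounded.
    var dropCount = Math.max(1, Math.floor(maxPoints * dropFraction));
    series.splice(0, dropCount);
  }
  return series;
}

// Simulate 12 frames on a chart that holds 10 points, dropping 30% at a time.
var joySeries = [];
for (var t = 0; t < 12; t++) {
  appendWithScroll(joySeries, { x: t, y: t * 5 }, 10, 0.3);
}
// The three oldest points (x = 0, 1, 2) have been discarded.
console.log(joySeries.map(function (p) { return p.x; }));
```

Dropping a batch of points at once, rather than one per frame, means the chart shifts in visible steps. A smaller `dropFraction` gives a smoother scroll at the cost of more frequent trimming.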

Beyond its emotion-analysis capabilities, Affectiva’s SDK can also match your facial expression with an emoji. I recreated Facebook’s floating emojis for Live Videos, but instead of taps or clicks, your facial expressions generate different emojis. Using Paper.js and an awesome library of emojis I found online, I was able to overlay a stream of happy, smirking, sad, surprised, and angry emojis on a video. However, because there was no convenient endpoint to pull current Live Videos, I resorted to a previously recorded Live Video for my demo.

Screenshot of Facebook demo with joy and surprise emojis firing.
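One way to decide which emojis to float on a given frame is a simple threshold on per-expression scores. The sketch below assumes a plain object of scores keyed by expression label. Those labels, the `EMOJI_FOR` table, and the function `emojisToLaunch` are all hypothetical and do not reflect how the SDK reports emojis.

```javascript
// Hypothetical mapping from expression labels to emoji characters.
var EMOJI_FOR = {
  happy: "😀",
  smirking: "😏",
  sad: "😞",
  surprised: "😮",
  angry: "😠"
};

// Return the emojis to launch for one frame: every known expression
// whose score meets the threshold, strongest first.
function emojisToLaunch(scores, threshold) {
  return Object.keys(scores)
    .filter(function (label) {
      return EMOJI_FOR.hasOwnProperty(label) && scores[label] >= threshold;
    })
    .sort(function (a, b) { return scores[b] - scores[a]; })
    .map(function (label) { return EMOJI_FOR[label]; });
}

// Happy and surprised clear the threshold; sad does not.
console.log(emojisToLaunch({ happy: 92, surprised: 60, sad: 3 }, 50));
```

Each returned emoji could then be handed to whatever drawing code animates it over the video.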

In addition to the APIs and demos I mentioned above, I created a fun way to search Giphy by emotion and tried filtering photos from my Facebook profile by emotion. I again used CanvasJS to render the “emoji wheel” in the Giphy demo.
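Searching Giphy by emotion can be as simple as using the detected emotion's name as the query term. Here is a small sketch that builds such a search URL. The endpoint and the `api_key`, `q`, and `limit` parameters are what I understand Giphy's public search API to use, so check them against Giphy's documentation. The key shown is a placeholder and `giphySearchUrl` is a made-up helper name.

```javascript
// Build a Giphy search URL for an emotion label.
// Endpoint and parameter names are assumed from Giphy's public search API;
// verify them against the official docs before relying on this.
function giphySearchUrl(emotion, apiKey, limit) {
  var params = new URLSearchParams({
    api_key: apiKey,
    q: emotion,
    limit: String(limit || 10) // default to 10 results when no limit is given
  });
  return "https://api.giphy.com/v1/gifs/search?" + params.toString();
}

// "YOUR_API_KEY" is a placeholder, not a working key.
console.log(giphySearchUrl("joy", "YOUR_API_KEY", 5));
```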

So far I’ve only listed a handful of individual use cases, but there are endless possibilities for emotion-enabled applications in the web browser. One can imagine thousands of viewers watching the same stream and a server that aggregates and calculates the average sentiment of everyone watching. Emotions can enhance social media, social networking, games, and many other web-based activities. So what are you waiting for? Give the JavaScript SDK a try and start developing your own emotion-enabled applications today!